Since TrueDelta promptly updates its Car Reliability Survey results four times a year, we can report on new models ahead of anyone else. Last year, we announced that the 2009 Jaguar XF was faring poorly. This provoked a blistering backlash from owners at a particular Jaguar forum. In the end, threads on reliability were deleted and future ones all but banned in the interest of preserving what remained of the UK auto industry.

The outraged owners argued that TrueDelta’s results could not be correct, since Jaguar had just been declared the most dependable make by J.D. Power. I pointed out that J.D. Power’s Vehicle Dependability Study (VDS) covers the third year of ownership, which meant 2006 models in that case, and that Jaguar had discontinued, redesigned, or replaced every model in its line save the XJ in the interim. So the results did not apply to the XF, or to the current XK for that matter.

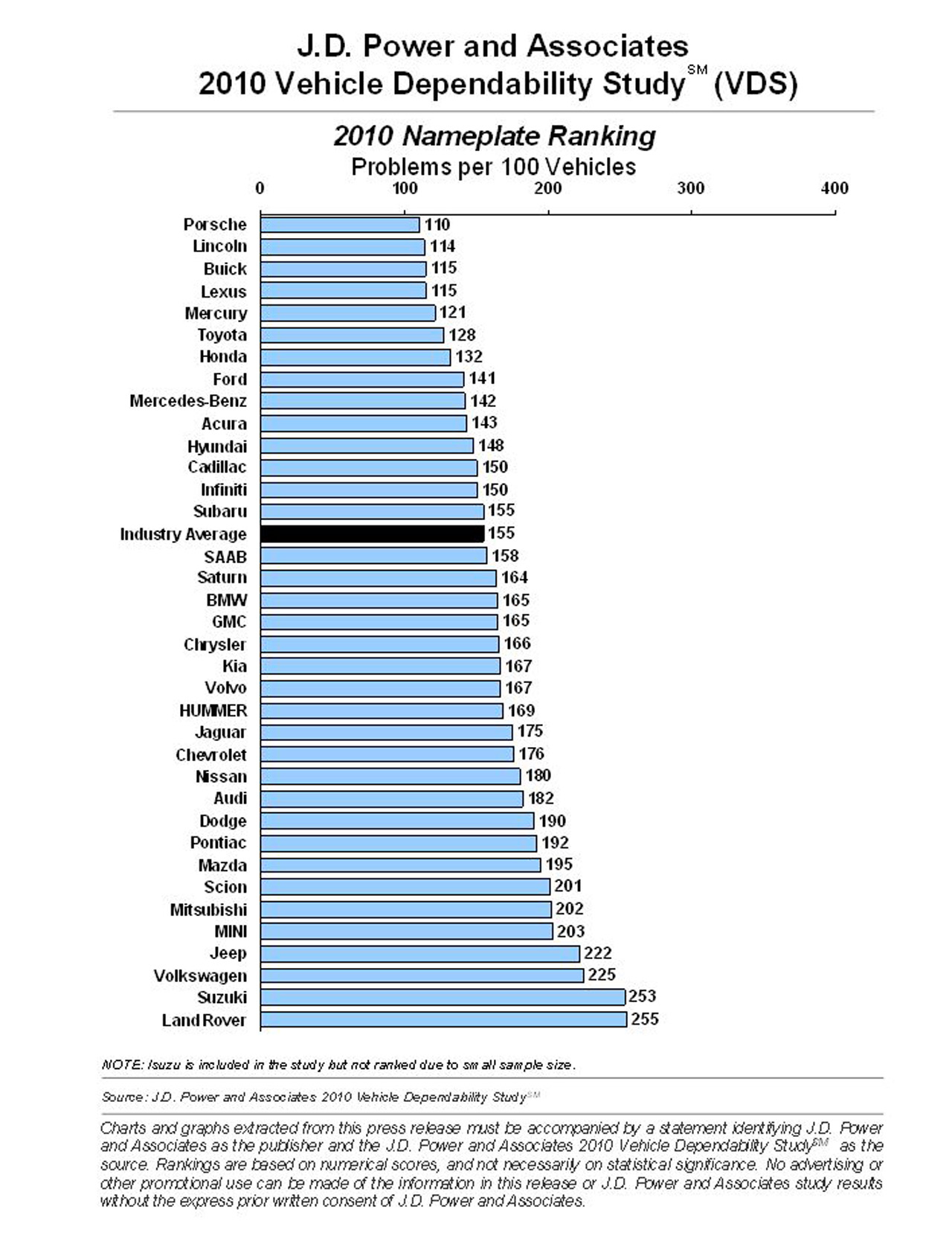

Well, J.D. Power has now released the 2010 Vehicle Dependability Study (VDS), which covers 2007s in their third year of ownership, and, as predicted, the redesigned XK has, all by its lonesome, sunk Jaguar’s ranking from 1st to 23rd. And it’ll only get uglier once the XF is reflected in these stats in another two years.

#1 this year: Porsche. Many people will wonder how Porsche fared so well. One likely factor: Porsches are often weekend cars that aren’t driven much. J.D. Power might consider doing what TrueDelta does, and post average odometer readings. A larger factor: THERE WAS NO 2007 CAYENNE—Porsche skipped straight from 2006 to 2008. The Cayenne is likely more troublesome than the sports cars, and is certainly driven more. So don’t expect a top VDS score for Porsche next year, when the Cayenne is again part of the mix.

“Long term” for J.D. Power continues to mean “the third year of ownership.” It used to mean the fifth year, but manufacturers have little use for fifth-year data, and this survey primarily exists to serve manufacturers willing to pay large sums for detailed results.

Many car buyers, though, are much more interested in how cars fare after the 3/36 warranty ends. J.D. Power has no information for them, hoping that car buyers will accept third-year problem frequencies as a sufficient indicator of how a car will perform over the long haul. Unfortunately, in many cases it is not. TrueDelta’s data suggest that all too often cars take a turn for the worse either soon after the warranty ends or after 100,000 miles.

As usual, the public gets brand-level scores rather than model-level scores from J.D. Power. Brand-level scores are of limited use for a car buyer, and can actually misinform as much as they inform. After all, people don’t buy the entire line. They buy a particular model. And the scores of models can vary widely within a brand.

Much is made of which brands did better this year (Porsche, Lincoln), and which did worse (Jaguar). Well, as noted above, the brand averages can be heavily influenced by the introduction of a single new design or the absence of a single old design.

For these and other reasons, a focus on model-level scores would be much more valid and useful.

Also worth noting: as in the past, most makes are tightly bunched around the average, which is 155 problems per 100 cars this year. Consumer Reports considers any score within 20 percent of the average in its own survey to be “about average.” Applying this standard to J.D. Power’s results, 21 of the 36 brands are “about average.”
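To make that 20 percent band concrete, here is a minimal sketch of the arithmetic. The function names are made up for illustration, the two brand scores at the bottom are hypothetical rather than actual VDS results, and treating the band's edges as inclusive is an assumption, since the cutoff handling isn't specified here.

```python
def about_average_band(average, tolerance_pct=20):
    """Return (low, high) bounds lying within tolerance_pct of the average."""
    low = average * (100 - tolerance_pct) / 100
    high = average * (100 + tolerance_pct) / 100
    return low, high


def is_about_average(score, average, tolerance_pct=20):
    """True if score falls inside the band (edges inclusive, an assumption)."""
    low, high = about_average_band(average, tolerance_pct)
    return low <= score <= high


INDUSTRY_AVERAGE = 155  # problems per 100 cars, the figure cited above

low, high = about_average_band(INDUSTRY_AVERAGE)
print(f"About average: {low:.0f} to {high:.0f} problems per 100 cars")

# Hypothetical brand scores, for illustration only (not real VDS data).
print(is_about_average(130, INDUSTRY_AVERAGE))  # inside the band
print(is_about_average(200, INDUSTRY_AVERAGE))  # outside the band
```

With a 155 average, the band runs from 124 to 186 problems per 100 cars, so a brand can differ from another by dozens of problems per 100 cars and both still count as “about average.”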

J.D. Power notes that for Cadillac, Ford, Hyundai, Lincoln, and Mercury, perceptions of reliability lag reality. No surprise, since (as I’ve found all too often) people tend to judge (and more often than not reject) data based on how well the data fit their perceptions, rather than judging their perceptions based on how well they fit the data.

J.D. Power’s explicit solution: convince consumers of gains in reliability. The implicit solution: pay to include VDS results in your ads. But are perceptions based on the VDS any more likely to be correct? Or, as seen in the Porsche and Jaguar cases, are they just as often part of the problem?